Artificial Intelligence is no longer just a scientific frontier; it is a philosophical battleground. As machines grow...

Month: May 2025

Artificial Intelligence has captured the imagination, and the anxiety, of humanity for decades. From the steely logic...

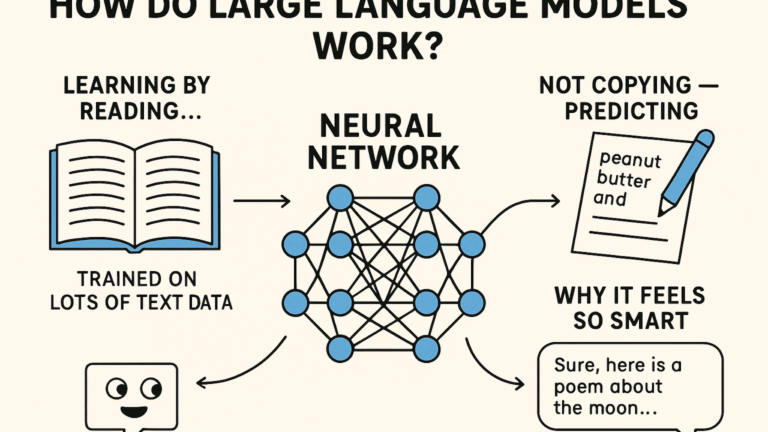

Large Language Models (LLMs) like ChatGPT might seem like magic: you type in a question or a...